OVERVIEW

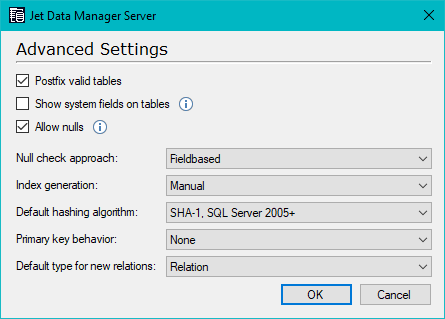

In the Jet Data Manager, you can configure what hashing algorithm the Jet Data Manager will use on both the project level and on the individual hashed fields.

Compatibility

For compatibility with old projects the algorithms used by earlier versions of Jet Data Manager are still available. Compared to the legacy algorithms the new algorithms have many advantages:

- Much faster: The new algorithms calculate hashes from binary data without performing any time consuming data conversions. Legacy algorithms, on the other hand, convert all data to text before calculating the hash.

- Much safer: The risk of getting the same hash from different data is much lower. The legacy algorithms concatenate the fields to be hashed into a string of no more than 4000 non-Unicode characters. This means that data types and any data past the limit is ignored by the hashing algorithm. When you need to hash a large amount of data, this increases the chance of clashes. The legacy hashing algorithms can also give identical hashing values for different SQL_Variant values. The new algorithms solve these potential issues and even overcomes the 8k byte limitation on older SQL Server versions.

- Can be nested: You can nest hashed fields with each other by calculating a hash from another hash field, which in turn can contain another hash field and so on. This also means that you can use hashed fields in history-enabled tables and combine history-enabled tables with junk dimension tables.

The following new hashing algorithms are available:

- SHA-1, SQL Server 2005 +: The default algorithm which is compatible with all versions of SQL Server supported by Jet Data Manager.

- SHA-1, SQL Server 2016 +(case sensitive or non): The fastest algorithm available in Jet Data Manager when the total amount of data to be hashed is more than 8000 bytes.

- SHA-2 512, SQL Server 2016 + (case sensitive or non): The safest algorithm available in terms of the risk of different data giving the same hash. Each field is 64 bytes, while the other algorithms are only 20 bytes.

- Plain Text (Debug): A string containing the fields that will be hashed. This is useful for debugging purposes, e.g. when you expect two hashed fields to contain the same value, but they do not.

When upgrading a project in Jet Data Manager from an older version the default hashing algorithm for the entire project is set to “Legacy binary”. All hashed fields in the project are set to “Use project default” so you can easily upgrade the project to one of the newer algorithms. The exception is junk dimension tables set to use integer hashes, those will be set to use the “Legacy integer” algorithm.

Because of the advantages listed above, we recommend that you upgrade existing projects to use one of the new algorithms by changing the project default.

Since all hash fields will be changed in the entire project at the same time in the end there will be no differences when comparing hash values between raw tables, valid tables, and transformation views. For the same reason, changing the hashing algorithm will not result in any data loss in your history tables nor improperly skip or include data in any type of incremental load.

Any hash fields in any tables (especially in history-enabled tables,) will be automatically recalculated when you change the hashing algorithm or make any changes to the selected fields. So there is no risk of losing history data or recording new unwanted changes in the history data.

Changing the hashing algorithm will not create new rows in your history enabled tables if the data fields selected in the hash fields remain unchanged. Incremental load is equally safe, which means that you can safely switch between different hashing algorithms.

Comments